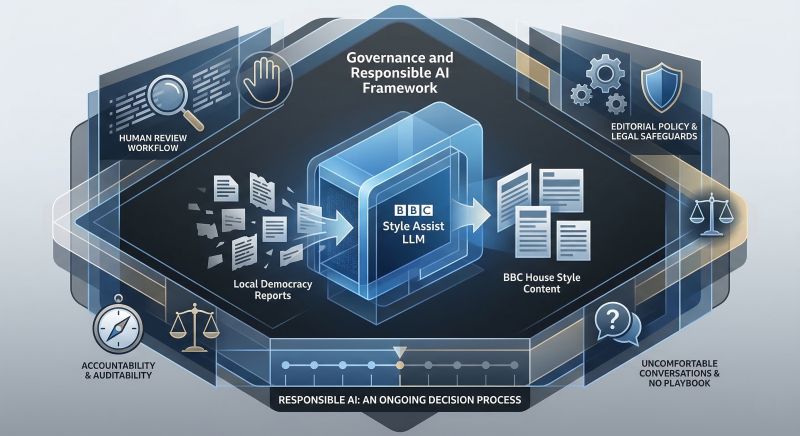

The BBC recently announced two generative AI pilots for its newsrooms. One of them is BBC Style Assist, an LLM that takes stories from the Local Democracy Reporting Service and rewrites them into BBC house style.

It sounds simple when written down. It wasn’t.

The hardest part was never getting the model to work. It was the governance.

The questions were immediate and uncomfortable. What happens when it gets a fact wrong? Who is liable? How do you stop journalists from trusting it too much, or too little? What counts as good enough output in a regulated public service environment where accuracy is not just a quality metric, but the whole point?

There was no playbook. We had to write one.

That meant sitting with Editorial Policy, Legal and Data Protection before a line of the tool went near a newsroom. It meant designing a human review workflow where every piece of AI-assisted content had a journalist’s eyes on it before publication, not as a box-tick, but as a genuine safeguard for accountability and auditability. It meant being honest with journalists about what the tool could and couldn’t do, and giving them space to push back.

What did I learn from it?

Responsible AI is not a compliance exercise you do once before launch. It’s an ongoing set of decisions about trust, accountability and oversight. Who decides when the AI is wrong? How do you detect drift? What does “human in the loop” actually mean when the loop is running at full speed?

Getting the model to work was an R&D success. Getting the governance right for the BBC newsroom was a different challenge entirely.

That’s the bit many organisations are underestimating right now.